This time, we explain these tools in a clear, parent-friendly way: what AI tutors and companions are, what benefits they offer, what the real risks are, and how to reduce potential dangers.

🤔 What Are AI Tutors and AI Companions?

AI Tutors are programs that help children learn, such as personalized homework helpers, language coaches, or math explainers.

Examples include:

Chat-based tools like ChatGPT

Learning platforms like Khan Academy (which now uses AI support)

Adaptive learning apps such as Duolingo

AI Companions, on the other hand, are more social: they are designed to talk, listen, respond to the user's needs or moods, and sometimes simulate friendship or emotional support.

👀‼️ Keep in mind that many of these apps are built to maximize engagement, not necessarily to help your child. The companies behind them don’t know your child, and your child’s well-being is not their priority.

Examples:

Replika

Character-based chat apps, like Character.AI

Some tools combine both tutoring and companionship features.

✅ The Real Benefits (When Used Appropriately)

Personalized Learning

AI tutors can:

Adjust to your child’s level

Explain things in multiple ways

Provide instant feedback

Be available anytime

For shy children, this can reduce embarrassment when asking “basic” questions.

Encourages Curiosity

Children can ask as many “why?” questions as they like; the AI never gets tired or impatient.

This can:

Increase independent learning

Build confidence

Support creativity

Extra Practice Without Pressure

AI tools can:

Generate quizzes

Offer writing feedback

Practice conversations (languages, interviews)

This is especially helpful when parents are busy or unsure how to help with certain subjects.

Emotional Support (In Some Cases)

Some children may:

Feel more comfortable expressing feelings to a non-judgmental system

Use AI journaling tools to process emotions

However, this is where we must be careful.

⚠️ The Real Risks

Let’s talk openly about the dangers.

Emotional Attachment to AI

Children may:

Form emotional bonds with the AI

Prefer AI over real friendships

Share very personal information

Young minds are still developing social skills and need genuine human interaction.

Misinformation

AI can:

Make mistakes

Present wrong facts confidently

Oversimplify complex issues

AI sounds intelligent, but it is not always correct.

Reduced Critical Thinking

If children get used to this kind of support, they may:

Always ask AI for answers instead of trying to figure things out themselves

Avoid struggling or making mistakes (even though both are among the best ways to learn)

These tools may weaken problem-solving skills.

Learning requires effort.

Privacy Risks

Children may share:

Full name

School name

Location

Family details

Some apps store conversations.

It is important that we, as parents, always check the data policies, age restrictions, and parental controls every time we download apps or software designed for children.

Over-Dependence

These tools may become:

The main source of help

The main emotional support

The main or only “friend.”

That can interfere with healthy development.

🎯 So… Should Parents Avoid AI Completely?

If possible, rely on human help first. If your child is going to use an AI tutor, that use should be supervised and limited. As for AI companions, we do not fully trust them.

🛡️ The Vigilant Parent Guide To Reduce Potential Dangers

Here are some practical and realistic tips to reduce the risks associated with these tools:

Use AI Together (At First)

Sit with your child and explore how it works, ask questions together, show how to verify information, and try to make it a collaborative experience.

Teach “AI Is a Tool, Not a Friend”

Explain clearly that AI does not have feelings, even if it says so. Almost all AI chatbots are prone to so-called hallucinations: statements that sound confident but are wrong, including statements about the AI itself. Kids, however, find it very hard to understand that AI does not “love” or “care.”

Children (and even adults) need that boundary.

Set very clear rules and try never to break them. For example:

No sharing personal information

No private chats without parent awareness

Use AI only for learning purposes

Encourage Verification

Teach your children to double-check what the AI tells them. You can use books, reliable websites, or your own knowledge to check the information together. Over time, this helps them learn to verify things on their own and builds critical thinking.

Limit Time

AI should not replace outdoor play, family conversations, real friendships, or physical activity.

Balance is key.

Choose Age-Appropriate Tools

Check minimum age requirements, parental dashboards, and content moderation policies.

Some companion apps are not appropriate for minors.

In short, when used with clear boundaries, AI tutors can:

✅ Improve learning

✅ Boost confidence

✅ Provide academic support

✅ Help shy children practice conversation

✅ Offer structured emotional reflection

However, without boundaries, these tools can lead to:

❌ Emotional confusion and dependence

❌ Over-reliance and “emotional” connection

❌ Privacy risks

❌ Reduced real-world social growth and other cognitive skills

Keep doing a great job, and stay vigilant!

Your guide to safer kids online

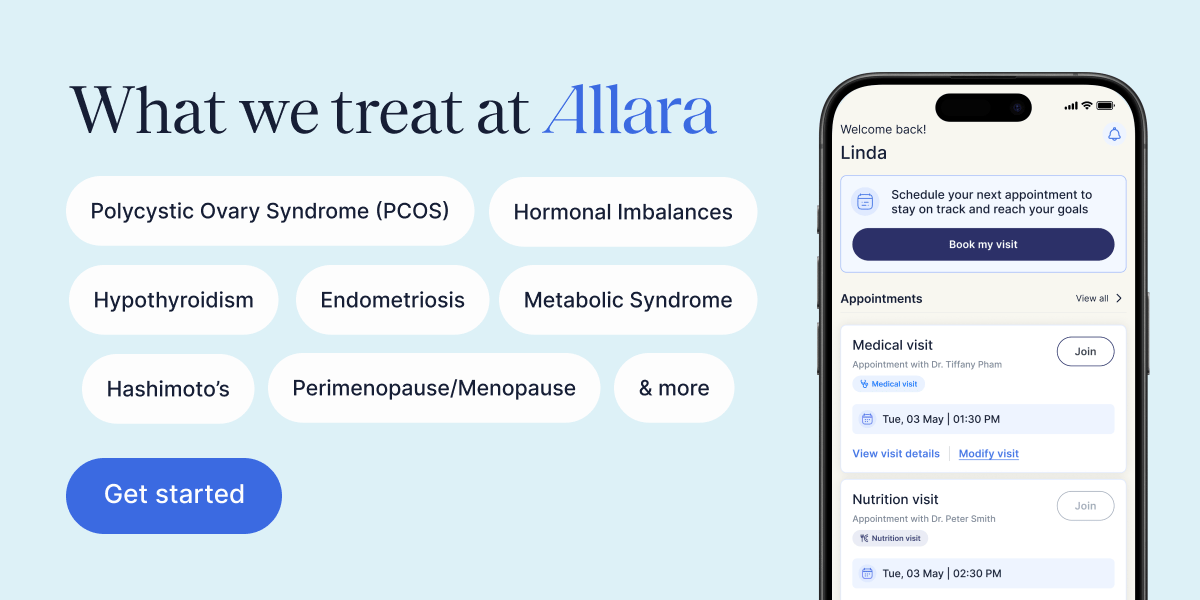
